I guess we’ve all heard about the “new” Agentforce from Salesforce. It’s like Einstein Copilot—but smarter! While Copilot focused on helping you analyze data and make decisions, Agentforce takes it a step further by performing tasks in real time. Imagine having an AI assistant that not only guides you but also acts for you, seamlessly improving productivity and efficiency.

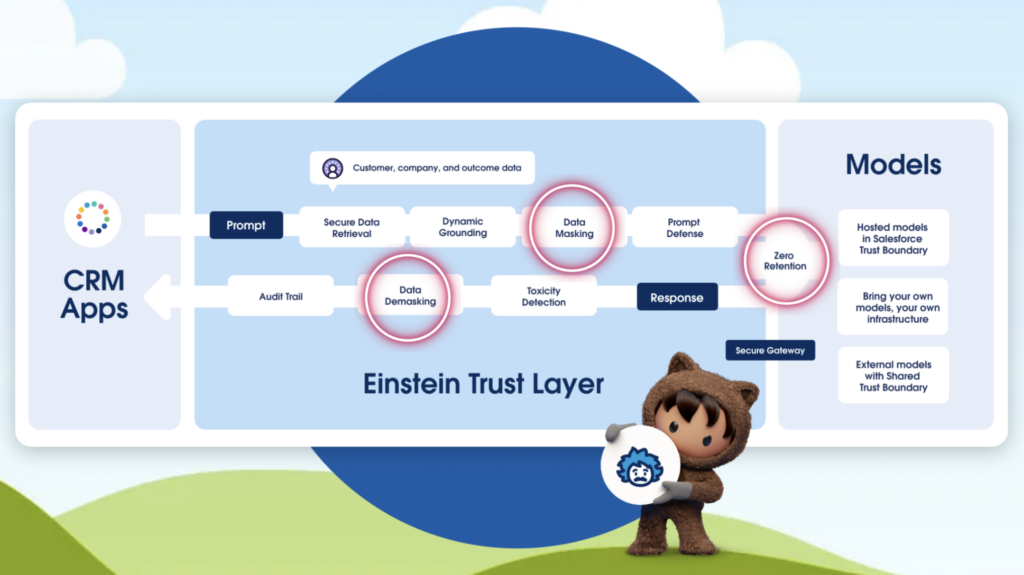

But with smarter AI comes the big question: What about data security? As businesses share more information with AI tools, protecting sensitive data becomes non-negotiable. That’s where Salesforce’s Einstein Trust Layer comes in. This robust security framework ensures your data remains safe, private, and compliant while you enjoy the full benefits of AI.

In this blog post, we’ll dive into how the Einstein Trust Layer keeps your data secure through Zero Data Retention, Data Masking, and Data Demasking, making AI adoption both powerful and safe. There is more to the Einstein Trust Layer, but here we’ll focus on these three parts.

Zero Data Retention: Your Data, Your Rules

Generative AI is only as useful as the data it processes. However, when sensitive data is involved, the risk of exposure can feel too high. That’s where Zero Data Retention comes in.

What It Means

Zero Data Retention means that prompts and responses sent to a third-party large language model (LLM) are not stored by the LLM provider. Once the model has processed your prompt and returned a response, the provider keeps neither. Your data is also never used to train or improve the provider’s models. This keeps proprietary and sensitive information under your organization’s control.

Why It Matters

- Protect Sensitive Information: Safeguard customer details, trade secrets, and other critical data from being stored or misused.

- Simplify Compliance: Make it easier to meet the data-handling requirements of strict regulations like GDPR, CCPA, and HIPAA.

- Build Customer Trust: Show customers that their privacy is your priority by ensuring their data is never exposed.

With Zero Data Retention, businesses can confidently use generative AI without worrying that their prompts will be stored outside their Salesforce environment.

Data Masking: Keeping Sensitive Information Hidden

Before any prompt is sent to the LLM for processing, Salesforce applies Data Masking to ensure no sensitive information is exposed.

How It Works

- Identifying Sensitive Data: The system scans your data for personally identifiable information (PII) or other sensitive details, such as names, addresses, or account numbers.

- Replacing with Placeholders: Identified data is replaced with placeholders like “[Name]” or “[Account Number]” before being sent to the LLM.

Because the LLM sees only placeholders in place of the sensitive values, Data Masking provides an extra layer of protection for your business and customers.
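To make the two masking steps concrete, here is a minimal sketch in Python. It is not Salesforce’s implementation: the `PATTERNS` table, the `mask` function, and the `[LABEL_n]` placeholder format are all assumptions made up for illustration, and real PII detection covers far more than two regular expressions.

```python
import re

# Hypothetical detection rules, for illustration only.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "ACCOUNT_NUMBER": re.compile(r"\b\d{8,12}\b"),
}


def mask(prompt):
    """Replace detected values with placeholders.

    Returns the masked prompt and a placeholder -> original mapping,
    which stays on the caller's side and is never sent to the LLM.
    """
    mapping = {}   # placeholder -> original value
    reverse = {}   # original value -> placeholder (reuse for repeats)
    counters = {}  # next index per label

    def make_replacer(label):
        def replacer(match):
            value = match.group(0)
            if value in reverse:
                return reverse[value]
            index = counters.get(label, 0)
            counters[label] = index + 1
            placeholder = f"[{label}_{index}]"
            mapping[placeholder] = value
            reverse[value] = placeholder
            return placeholder
        return replacer

    masked = prompt
    for label, pattern in PATTERNS.items():
        masked = pattern.sub(make_replacer(label), masked)
    return masked, mapping


masked, mapping = mask("Email jane.doe@example.com about account 1234567890.")
print(masked)
```

The part worth noticing is the mapping: the masked prompt is what goes to the model, while the table of original values stays behind so the response can be restored later.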

Data Demasking: Restoring Context Securely

Once the LLM processes the masked data and generates a response, Data Demasking takes place in Salesforce. This step reintegrates the original sensitive information, ensuring that the final output is accurate and complete.

How It Works

- Placeholder Handling: The LLM processes the masked input and generates a response that still contains the placeholders rather than the original values.

- Reintegrating Sensitive Data: Salesforce securely replaces placeholders in the response with the original data, ensuring no context is lost.

This seamless transition ensures that sensitive data remains protected throughout the process, while the output remains meaningful and actionable.
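The demasking step can be sketched the same way. Again, this is an illustration under assumptions, not Salesforce’s code: the `demask` function, the `[LABEL_n]` placeholder format, and the sample mapping are hypothetical.

```python
import re

# Matches hypothetical placeholders such as [EMAIL_0] or [ACCOUNT_NUMBER_0].
PLACEHOLDER = re.compile(r"\[[A-Z_]+_\d+\]")


def demask(response, mapping):
    """Swap each known placeholder in the LLM response back to its original value.

    Placeholders that are not in the mapping are left untouched.
    """
    def restore(match):
        token = match.group(0)
        return mapping.get(token, token)

    return PLACEHOLDER.sub(restore, response)


# The mapping was built during masking and never left the caller's side.
mapping = {
    "[EMAIL_0]": "jane.doe@example.com",
    "[ACCOUNT_NUMBER_0]": "1234567890",
}
response = "I emailed [EMAIL_0] regarding account [ACCOUNT_NUMBER_0]."
print(demask(response, mapping))
```

The substitution runs in a single pass over the response, so restored values are never re-scanned for placeholders, and an unknown token passes through unchanged instead of raising an error.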

Why These Features Matter Together

Salesforce’s combination of Zero Data Retention, Data Masking, and Data Demasking offers a holistic approach to secure and responsible AI use. Together, they provide:

- Data Privacy: Prompts are not retained by the LLM provider, and sensitive information is masked before it is sent.

- Regulatory Compliance: These features simplify adherence to data privacy laws by ensuring data security at every step.

- Business Confidence: By prioritizing security and transparency, Salesforce empowers businesses to leverage AI without compromising trust or integrity.